Configuration: authenticating to S3 and Redshift

Recommendations for working with Redshift: query execution may extract large amounts of data to S3. If you plan to perform several queries against the same data in Redshift, Databricks recommends saving the extracted data using Delta Lake.

Data can be read from a Redshift table with Scala or R, both on Databricks Runtime 10.4 LTS and below and on Databricks Runtime 11.3 LTS and above. The Scala read uses these options:

- option("dbtable", "schema-name.table-name"): if schema-name is not specified, it defaults to "public".
- option("port", "port"): optional; the default port 5439 is used if not specified.
- option("forward_spark_s3_credentials", true)

After you have applied transformations to the data, you can use the data source API to write the data back to another table. You can also write back to a table using IAM role based authentication.
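The read and write options named above can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the connector's documented example: the option names host, user, password, database, tempdir and aws_iam_role, the format name "redshift", and the rule that only one authentication option is passed at a time are assumptions that do not appear in the text above, and the helper names are hypothetical. Only dbtable, port and forward_spark_s3_credentials come from the text.

```python
from typing import Dict, Optional


def redshift_options(
    host: str,
    user: str,
    password: str,
    database: str,
    table: str,
    tempdir: str,
    schema: Optional[str] = None,
    port: Optional[int] = None,
    iam_role_arn: Optional[str] = None,
) -> Dict[str, str]:
    """Build an option map for a Redshift read or write (hypothetical helper)."""
    options = {
        # Assumed option names, not shown in the text above.
        "host": host,
        "user": user,
        "password": password,
        "database": database,
        "tempdir": tempdir,
        # Without a schema prefix the connector defaults to "public".
        "dbtable": f"{schema}.{table}" if schema else table,
    }
    if port is not None:
        # Optional: the default port 5439 is used when this is omitted.
        options["port"] = str(port)
    if iam_role_arn is not None:
        # Assumption: IAM role based authentication uses "aws_iam_role"
        # and replaces credential forwarding rather than adding to it.
        options["aws_iam_role"] = iam_role_arn
    else:
        options["forward_spark_s3_credentials"] = "true"
    return options


def read_redshift_table(spark, options: Dict[str, str]):
    """Load a Redshift table into a DataFrame (format name is an assumption)."""
    return spark.read.format("redshift").options(**options).load()


def save_as_delta(df, table_name: str) -> None:
    """Persist extracted data as a Delta table so repeated queries skip Redshift."""
    df.write.format("delta").mode("overwrite").saveAsTable(table_name)


def write_redshift_table(df, options: Dict[str, str]) -> None:
    """Write a transformed DataFrame back to another Redshift table."""
    df.write.format("redshift").options(**options).mode("errorifexists").save()
```

In a notebook, `read_redshift_table(spark, redshift_options(...))` would return a DataFrame that can then be passed to `save_as_delta` before running several queries against it.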

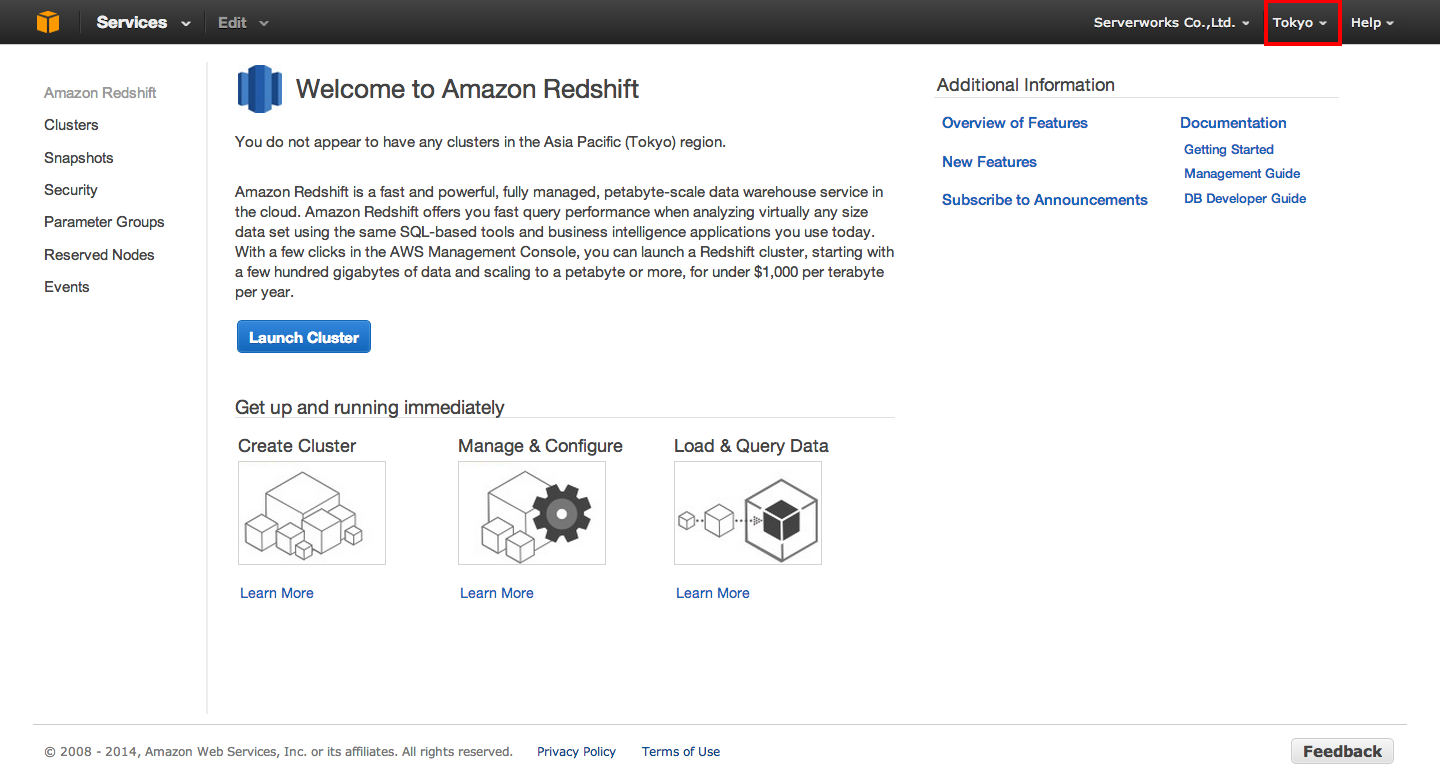

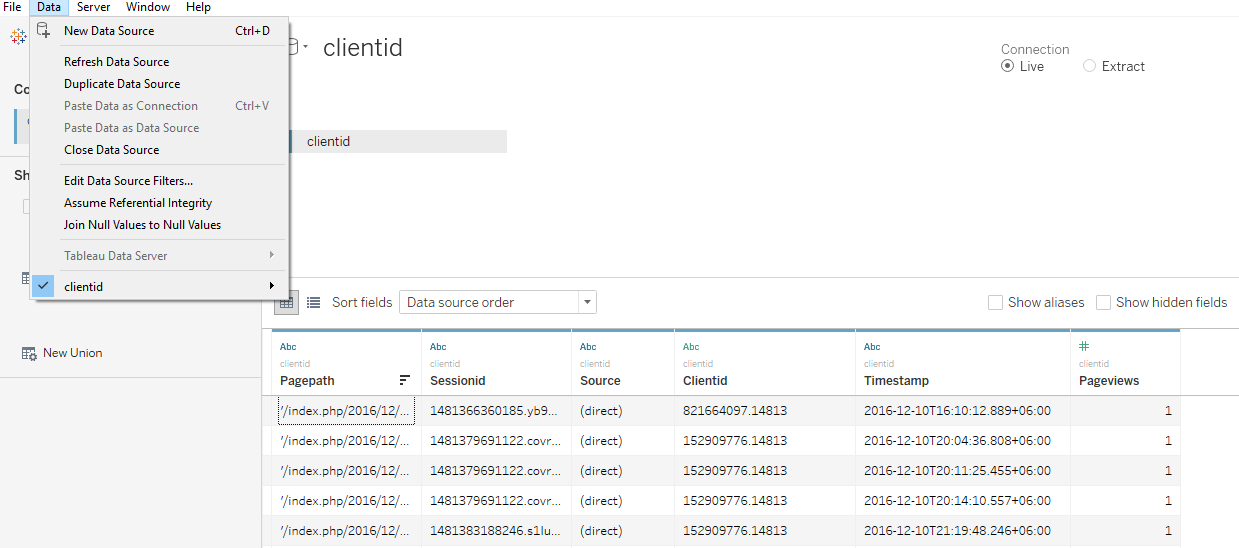

The SQL API supports only the creation of new tables and not overwriting or appending. When you read or write data using SQL, on Databricks Runtime 10.4 LTS and below or on Databricks Runtime 11.3 LTS and above, start with DROP TABLE IF EXISTS redshift_table so that the table can be created anew. In the SQL options, dbtable takes a value of the form schema-name.table-name (if schema-name is not provided, it defaults to "public"), and port is optional (the default port 5439 is used if not specified).

In Python, reading data from a table on Databricks Runtime 10.4 LTS and below or on Databricks Runtime 11.3 LTS and above uses these options:

- option("dbtable", "schema-name.table-name"): if schema-name is not specified, it defaults to "public".
- option("port", "port"): optional; the default port 5439 is used if not specified.
- option("query", "select x, count(*) group by x")
- option("forward_spark_s3_credentials", True)

After you have applied transformations to the data, you can use the data source API to write the data back to another table. You can also write back to a table using IAM role based authentication.

External locations defined in Unity Catalog are not supported as tempdir locations.

The CData JDBC Driver for Redshift implements JDBC standards that enable third-party tools to interoperate, from wizards in IDEs to business intelligence tools. This article shows how to connect to Redshift data with wizards in DBeaver and browse data in the DBeaver GUI.

Create a JDBC Data Source for Redshift Data

Follow the steps below to load the driver JAR in DBeaver.

1. Open the DBeaver application and, in the Databases menu, select the Driver Manager option.
2. Click New to open the Create New Driver form.
3. In the Driver Name box, enter a user-friendly name for the driver.
4. In the create new driver dialog that appears, select the driver JAR file, located in the lib subfolder of the installation directory.
5. Click the Find Class button and select the RedshiftDriver class from the results. This will automatically fill the Class Name field at the top of the form.
6. Add jdbc:redshift: in the URL Template field.

Follow the steps below to add credentials and other required connection properties.

1. In the Databases menu, click New Connection.
2. In the Create new connection wizard that results, select the driver.
3. On the next page of the wizard, click the driver properties tab.
4. Enter values for authentication credentials and other properties required to connect to Redshift.

To connect to Redshift, set the following:

- Server: Set this to the host name or IP address of the cluster hosting the Database you want to connect to.
- Port: Set this to the port of the cluster.
- Database: Set this to the name of the database. Or, leave this blank to use the default database of the authenticated user.
- User: Set this to the username you want to use to authenticate to the Server.
- Password: Set this to the password you want to use to authenticate to the Server.

You can obtain the Server and Port values in the AWS Management Console:

1. On the Clusters page, click the name of the cluster.
2. On the Configuration tab for the cluster, copy the cluster URL from the connection strings displayed.

For assistance in constructing the JDBC URL, use the connection string designer built into the Redshift JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line. Fill in the connection properties and copy the connection string to the clipboard. A typical connection string looks like this:

jdbc:redshift:User=admin;Password=admin;Database=dev;Server=examplecluster.my.;Port=5439;

You can now query information from the tables exposed by the connection: right-click a table and then click Edit Table. The data is available on the Data tab.
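As an illustration of how the Server, Port, Database, User, and Password properties combine into a JDBC URL, here is a small Python sketch. It assumes the semicolon-delimited Key=Value form after the jdbc:redshift: prefix; the function name and the host name in the usage note are hypothetical, and values containing semicolons or other special characters are not escaped. The driver's built-in connection string designer remains the reliable way to produce the string.

```python
DEFAULT_PORT = 5439  # default Redshift port mentioned in the text


def build_redshift_jdbc_url(
    server: str,
    user: str,
    password: str,
    database: str = "",
    port: int = DEFAULT_PORT,
) -> str:
    """Assemble a jdbc:redshift: connection string (hypothetical helper)."""
    properties = [
        ("User", user),
        ("Password", password),
        # A blank Database is left out so the driver can fall back to the
        # default database of the authenticated user.
        ("Database", database),
        ("Server", server),
        ("Port", str(port)),
    ]
    body = "".join(f"{key}={value};" for key, value in properties if value != "")
    return "jdbc:redshift:" + body
```

For example, `build_redshift_jdbc_url("examplecluster.example.invalid", "admin", "admin", "dev")` yields a string that can be pasted into the connection's JDBC URL field, with the placeholder host replaced by the cluster URL copied from the AWS Management Console.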
